V7 Labs raises $3 million to empower AI teams with automated training data workflows

December 18th, 2020

London, UK - 18 December 2020 - V7 Labs, the computer vision platform trusted by hundreds of customers including Tractable, GE Healthcare and Merck, today announced the closing of a $3M Seed round led by Amadeus Capital Partners, joined by Partech, Air Street Capital, and Miele Venture Capital.

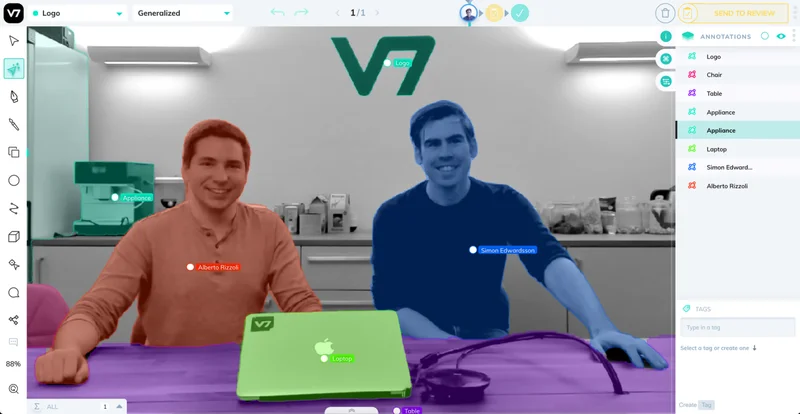

V7 Labs was founded in 2018 by Alberto Rizzoli and Simon Edwardsson. Its platform speeds up the creation of high-quality training data by 10 to 100 times. V7 does this by letting users build automated image and video data pipelines, organize and version complex datasets, and train and deploy state-of-the-art vision AI models while managing their lifecycle in production.

Data-first approach to building robust AI systems

AI is spreading throughout industries and requires companies to continuously collect, organise and label image data so that AI models can adapt to new scenarios, camera models or objects. Previously, AI companies built these systems in-house and had to maintain them alongside rapid developments in machine learning. They are now switching to V7, which condenses best practices for organising datasets, labelling them autonomously and monitoring the status of AI models into a single SaaS platform.

The ML-Ops opportunity

“The story of the last 5 years for AI-first companies has been one of rapidly evolving tooling and infrastructure best practices. The very best AI-first companies are now moving from their home-grown data annotation, versioning and model lifecycle management tools to V7’s SaaS platform because it abstracts this complexity and exposes industry best practices. Companies can now iterate faster and have confidence in the robustness of their production models by building on V7.” Nathan Benaich, General Partner, Air Street Capital.

For machine learning companies looking to grow, labelling data is only the tip of the iceberg. Once an AI training image is labelled, the information representing "what it teaches" is stored as a faceless code file. The world's best AI teams know their data through and through, and want to inspect each training and test sample to identify bias, outliers and over- or under-representation.

V7 lets teams monitor this data's life cycle as it is captured, labelled by AI models or humans, and sent on to further improve a model's learning.

V7's largest area of growth is healthcare data, thanks to its support for medical imaging, while its largest area by volume is expert visual inspection: checking that power lines, oil pipelines and other integrity-critical infrastructure show no rust, leaks or damage.

Next year, V7 will expand its team and feature-set to support the success of its customers.

Automating annotation

V7's platform offers a uniquely powerful capability called Auto-Annotate, which can segment any object and turn it into an annotation an AI can learn from. Auto-Annotate was trained on over 10 million images of varied types, curated by V7 for high accuracy. As a result, it works on anything from medical images such as X-rays to satellite imagery and damaged cars.

Auto-Annotate is the most accurate AI for automated segmentation of arbitrary objects. It was recently benchmarked on popular computer vision datasets, scoring 96.7% IoU on GrabCut using 2 clicks and 90.6% on the DAVIS challenge with 3.1 clicks.
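IoU (intersection over union), the metric behind these benchmark scores, compares a predicted segmentation mask with a reference mask: the number of pixels they share divided by the number of pixels covered by either. The sketch below is a minimal illustration, assuming masks are stored as sets of (row, column) pixel coordinates; that representation, the function name `mask_iou`, and the empty-mask convention are illustrative choices, not how V7 or the benchmarks implement the metric.

```python
from typing import Set, Tuple

# Hypothetical representation: a mask is the set of (row, column) pixels it covers.
Pixel = Tuple[int, int]


def mask_iou(predicted: Set[Pixel], ground_truth: Set[Pixel]) -> float:
    """Intersection over union of two binary masks given as pixel sets."""
    union = predicted | ground_truth
    if not union:
        # Assumed convention: two empty masks count as perfect agreement.
        return 1.0
    intersection = predicted & ground_truth
    return len(intersection) / len(union)


# Two 2-pixel masks that overlap in exactly one pixel: 1 shared / 3 covered.
pred = {(0, 0), (0, 1)}
truth = {(0, 1), (1, 1)}
print(f"IoU = {mask_iou(pred, truth):.3f}")  # IoU = 0.333
```

Read this way, a 96.7% IoU means that, averaged over the benchmark, the predicted and reference masks share roughly 96.7% of the pixels that either one covers.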

"If the proliferation of ML has taught us anything, it is that turning your AI data's lifecycle into a flexible end-to-end workflow is key. This is exacerbated by the growth of deep learning models in production and an increasing demand for automation. V7 is building the finest platform for training data out there, with built-in AI models that outclass the previous state-of-the-art. We're excited to back the team as it brings its new product releases to market." Pierre Socha, Partner, Amadeus Capital Partners.

https://www.youtube.com/watch?v=SvihDSAY4TQ&feature=emb_title

Auto-Annotate, which runs in the cloud 24/7, produces the equivalent output of 500 full-time labelling workers on an average day.

Image: a digital pathology image used in cancer research, hosted on V7.

The platform supports 12 annotation types and specialized scientific data formats, such as DICOM medical imaging, SVS (used in digital pathology, where images can reach 100,000 by 100,000 pixels) and 4K video. Machine learning teams can add hundreds of polygon annotations to represent objects in their training data on V7, which can handle over 2 million data points in a single image without lag in a standard web browser.

Dataset inspection and project management - an unseen need

V7 lets ML teams search through million-image datasets and visualize the labels on their images. Datasets can be versioned to exclude potentially biasing samples, and every label added is logged to keep an accurate record of how the training data evolves.

Dataset management also supports customizable workflows that each image passes through, as humans or AI models apply labels, before it is considered complete and ready for training. All datasets start with a largely human-driven annotation workflow and become more automated as AI models are introduced while the training data grows.

AI model management

Models learn from datasets. When models sit close to where that data resides, they can learn continually from new data and point out any training images that have skewed their performance over time. This new paradigm for training AI allows teams working on accuracy-critical models to learn when and why a model will fail. There is much more to come for V7 users in 2021 to bridge the gap between training data and AI model performance. Today, V7 allows teams to train and run deep learning models in the cloud on managed GPU servers, removing the need for dev-ops work.

The name "V7"

V7 was named after the areas of the visual cortex. During the Cambrian explosion, roughly 500 million years ago, life developed sight, which it continued to refine over epochs of evolution, accelerating the diversity of its skills. The human visual cortex has six distinguishable areas: V1 through V6. Rizzoli and Edwardsson want to create a hypothetical seventh area meant for machines, and to spark a burst of new capabilities similar to the one that followed when life began to see.

About Amadeus Capital Partners

Amadeus Capital Partners is a global technology investor. Since 1997, the firm has raised over $1bn for investment and used it to back 165 companies. With vast experience and a great network, Amadeus’ team of investors and entrepreneurs share a passion for the transformative power of technology. Pioneering businesses we’ve backed include cyber security vendor ForeScout (NASDAQ: FSCT); Graphcore, innovators in intelligent microprocessors; IVF genetic testing company Igenomix; IndiaMART, the B2B online marketplace (NSE: INDIAMART); and speech recognition company VocalIQ (acquired by Apple). Find us at amadeuscapital.com and @AmadeusCapital.

About Air Street Capital

Air Street Capital is a venture capital firm investing in AI-first technology and life science companies. We’re a team of experienced investors, founders, and senior leaders from companies including Google, Niantic, Lyft, Facebook, Apple, and DeepMind. We work with entrepreneurs across Europe and the US from the very beginning of their company building journey. Our portfolio includes Allcyte, Anagenex, Graphcore, Intenseye, LabGenius, Mission Barns, V7 Labs, and ZOE. Learn more through our monthly industry analysis newsletter, Your Guide to AI, our annual State of AI Report, and our website.

About Partech

With a portfolio of almost 180 companies spread across 30 countries in Europe, the US, Africa, and Asia, Partech has been one of the leading international investors helping visionary founders for almost 40 years. The Partech team – made up of both former entrepreneurs and executives from 15 different countries – brings capital, experience, strategic support, and networks to entrepreneurs at every stage of development: seed, venture, and growth. With over €1.5B under management, Partech invests from €200K to €50M in B2B and B2C technologies reshaping industries. Companies backed by Partech have completed more than 21 IPOs and more than 50 strategic M&A transactions valued over $100M.

For more info: www.partechpartners.com

About Miele Venture Capital

Miele Venture Capital GmbH supports fledgling companies with promising ideas and technologies that are seeking a committed and financially strong partner. Thematically, Miele Venture Capital focuses on creative solutions that are compatible with Miele products, services, value chains, business models and manufacturing processes. Forms of co-operation range from joint development projects and management support through to direct equity stakes.
